My Brain Painted a Picture While My Eyes Were Closed

NOTE: At my direction, Claude Opus did the heavy lifting in the code. I developed and laid the scaffold for what we were doing, how I wanted the UX/UI to look/feel/sound, etc. The observations here are a mix of mine and Claude’s distillation of “what happened” 🙃

It Started With a Repost

I was scrolling X yesterday morning and Brian Roemmele posted a video of something called the Human Synapse Decoder — a two-channel EEG rig that was doing real-time brain signal decoding. It was wild. I reposted it.

And then I thought: wait. I have a Muse headband somewhere.

I dug through my closet and pulled out a Muse MU-02 — the original 2016 model. Four dry electrodes, 256Hz sample rate, Bluetooth LE. It had been sitting in a drawer for years. I plugged it in to charge. It wouldn’t turn on. It was stuck in bootloader mode. I power-cycled it. It came back to life.

What happened over the next eight hours is… well, it’s a lot. Let me walk you through it.

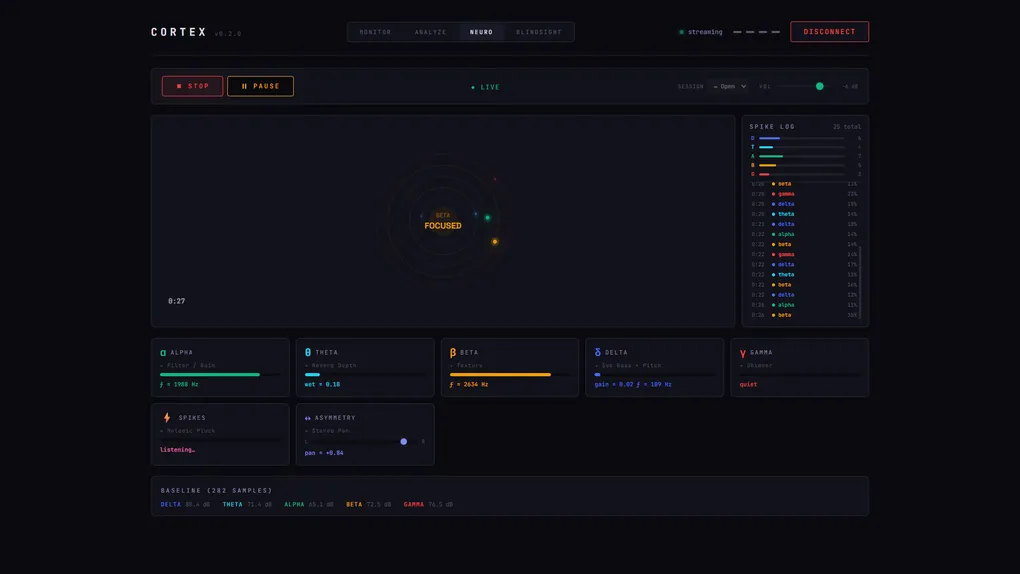

CORTEX: The Observatory

The first thing I needed was a way to see what the headband was actually picking up. Not through the Muse app — I wanted raw signal, real-time, in the browser.

So I built CORTEX.

CORTEX is a real-time EEG visualization and neurofeedback platform built in TypeScript with Vite. It connects to the Muse headband over Web Bluetooth using the muse-js library, then does all the signal processing client-side: a custom FFT (Fast Fourier Transform; specifically a radix-2 Cooley-Tukey implementation that decomposes a time-domain signal into its constituent frequencies) on rolling 256-sample windows (one second of data at 256Hz), Hanning windowing (a smoothing function applied to FFT input to reduce spectral leakage at the edges of the sample window), Power Spectral Density extraction, and frequency band decomposition into the five standard EEG bands:

- δ Delta (1–4 Hz) — deep sleep, unconscious processing

- θ Theta (4–8 Hz) — meditation, memory, drowsiness

- α Alpha (8–13 Hz) — relaxed awareness, eyes closed

- β Beta (13–30 Hz) — active thinking, focus, problem-solving

- γ Gamma (30–50 Hz) — high-level cognition, binding, peak states
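For the curious, here is roughly what that chain looks like in code. This is a minimal sketch, not the actual CORTEX source: the function names (`hann`, `fft`, `bandPowers`) and the choice to sum squared magnitudes per bin are my own assumptions for illustration. Only the 256Hz rate, the 256-sample window, and the band edges come from the description above.

```typescript
// Sketch only: Hann window -> radix-2 FFT -> power per bin -> power per band.
// All names are illustrative assumptions, not taken from CORTEX.

const SAMPLE_RATE = 256; // Hz, the Muse sample rate quoted above

// Band edges from the list above, as [low, high) in Hz.
const BANDS: Record<string, [number, number]> = {
  delta: [1, 4],
  theta: [4, 8],
  alpha: [8, 13],
  beta: [13, 30],
  gamma: [30, 50],
};

// Hann window: tapers both ends of the buffer toward zero.
function hann(n: number): Float64Array {
  const w = new Float64Array(n);
  for (let i = 0; i < n; i++) {
    w[i] = 0.5 * (1 - Math.cos((2 * Math.PI * i) / (n - 1)));
  }
  return w;
}

// In-place iterative radix-2 Cooley-Tukey FFT. Length must be a power of two.
function fft(re: Float64Array, im: Float64Array): void {
  const n = re.length;
  // Bit-reversal permutation.
  for (let i = 1, j = 0; i < n; i++) {
    let bit = n >> 1;
    for (; j & bit; bit >>= 1) j ^= bit;
    j ^= bit;
    if (i < j) {
      [re[i], re[j]] = [re[j], re[i]];
      [im[i], im[j]] = [im[j], im[i]];
    }
  }
  // Butterfly passes over doubling block lengths.
  for (let len = 2; len <= n; len <<= 1) {
    const ang = (-2 * Math.PI) / len;
    const half = len >> 1;
    for (let start = 0; start < n; start += len) {
      for (let k = 0; k < half; k++) {
        const wr = Math.cos(ang * k);
        const wi = Math.sin(ang * k);
        const a = start + k;
        const b = a + half;
        const tr = re[b] * wr - im[b] * wi;
        const ti = re[b] * wi + im[b] * wr;
        re[b] = re[a] - tr;
        im[b] = im[a] - ti;
        re[a] += tr;
        im[a] += ti;
      }
    }
  }
}

// Window one buffer of samples, transform it, and sum power inside each band.
function bandPowers(samples: Float64Array): Record<string, number> {
  const n = samples.length;
  const w = hann(n);
  const re = new Float64Array(n);
  const im = new Float64Array(n);
  for (let i = 0; i < n; i++) re[i] = samples[i] * w[i];
  fft(re, im);
  const binHz = SAMPLE_RATE / n; // 1 Hz per bin for a 256-sample window
  const out: Record<string, number> = {};
  for (const name of Object.keys(BANDS)) {
    const [lo, hi] = BANDS[name];
    let sum = 0;
    for (let k = Math.ceil(lo / binHz); k * binHz < hi; k++) {
      sum += (re[k] * re[k] + im[k] * im[k]) / n;
    }
    out[name] = sum;
  }
  return out;
}
```

Feed `bandPowers` one second of samples from a single electrode and you get one number per band. A pure 10 Hz test tone should land almost entirely in alpha, which is a handy sanity check before trusting anything a real headband tells you.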

All rendered in real-time on canvas with rolling buffers, session recording to CSV, and markdown report generation you can hand to your AI analyst.

The whole thing runs in Chrome. No backend. No subscription. No SDK. Just npm run dev and strap on your headband.

The First Sessions: Learning to Listen

The first recording sessions were about establishing baselines and figuring out what was real signal versus noise. A few things I learned fast:

Over-ear headphones are a no-go. My Audio-Technica studio cans sit directly on TP9 and TP10 — the ear electrodes, which happen to be the best alpha detectors on the whole headband. The electromagnetic field from the cup drivers was flooding the Gamma band. Session 1 (with headphones): Gamma at 80.5 dB. Session 2 (without): 73.7 dB. A 6.8 dB drop just from removing the cans. If you’re doing neurofeedback and wearing over-ears, your data is lying to you. IEMs or nothing.

Electrode contact matters more than you think. Skin oils are insulators. The conductive rubber on the ear sensors accumulates dust. A quick wipe of the skin and electrodes before each session dropped my peak-to-peak noise by 16%. I now do a little cleaning ritual every time.

The first 90 seconds are garbage. Electrodes need time to settle against your skin and reach a stable impedance. My peak-to-peak voltage was 48–57µV in the first minute and a half, then dropped to 22–28µV and stayed there. CORTEX now supports configurable settle-time trimming in the reports.
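The two ideas in that last point, peak-to-peak amplitude and settle-time trimming, are simple enough to sketch. The names below (`peakToPeak`, `trimSettle`, the `Sample` shape) are hypothetical and the 90-second default just mirrors my own observation; this is not how CORTEX necessarily implements it.

```typescript
// Sketch only: hypothetical helpers, not the CORTEX implementation.

interface Sample {
  t: number;     // seconds since the session started
  value: number; // electrode voltage in µV
}

// Peak-to-peak amplitude: the spread between the largest and smallest value.
function peakToPeak(values: number[]): number {
  if (values.length === 0) return 0;
  let min = Infinity;
  let max = -Infinity;
  for (const v of values) {
    if (v < min) min = v;
    if (v > max) max = v;
  }
  return max - min;
}

// Drop everything recorded before the electrodes have settled.
// The 90-second default is an assumption taken from the sessions described above.
function trimSettle(samples: Sample[], settleSeconds = 90): Sample[] {
  return samples.filter((s) => s.t >= settleSeconds);
}
```

Running `peakToPeak` over the trimmed and untrimmed recordings side by side is a quick way to see whether your own settle time is closer to 30 seconds or 3 minutes.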

NEURO-ARIA: When Your Brain Makes Music

Once I had clean signal, the next question was obvious: what if the EEG data drove sound?

I already had dreamARIA — my generative audio engine that creates evolving ambient soundscapes. So I built a bridge. CORTEX streams normalized band powers over to a neurofeedback audio engine that maps brain states to Web Audio API parameters:

- Alpha power → drone volume and harmonic richness (the reward signal — deeper relaxation makes warmer, richer sound)

- Theta power → reverb depth and delay feedback (meditative states create expansive sonic space)

- Beta power → rhythmic density and high-frequency texture (active thinking adds percussive complexity)

- Frontal asymmetry (AF7 vs AF8) → stereo panning and tonal mode

Heavy exponential smoothing so it doesn’t jitter. Baseline-relative mapping so it responds to your brain, not some absolute threshold. The result is a generative soundscape that blooms and breathes with your neural activity.
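Here is a minimal sketch of those two tricks, exponential smoothing and baseline-relative mapping. Everything named here (`makeSmoother`, `relativeLevel`, `mapToAudio`, the smoothing factor, the output ranges) is an assumption for illustration; the real engine's numbers may differ.

```typescript
// Sketch only: hypothetical names and constants, not the NEURO-ARIA source.

const clamp01 = (v: number): number => Math.min(1, Math.max(0, v));

// Exponential smoothing: each new reading nudges the state by `factor`.
// A small factor (e.g. 0.05) gives heavy smoothing.
function makeSmoother(factor: number): (x: number) => number {
  let state: number | undefined;
  return (x: number): number => {
    state = state === undefined ? x : state + factor * (x - state);
    return state;
  };
}

// Baseline-relative level: 0.5 at baseline, 1 at (1 + span) times baseline,
// 0 at (1 - span) times baseline. `span` is an assumed tuning knob.
function relativeLevel(power: number, baseline: number, span = 1): number {
  return clamp01(0.5 + (power / baseline - 1) / (2 * span));
}

interface AudioTargets {
  droneGain: number;     // driven by alpha
  reverbMix: number;     // driven by theta
  rhythmDensity: number; // driven by beta
}

// Map three 0..1 levels onto illustrative audio parameter targets.
function mapToAudio(alpha: number, theta: number, beta: number): AudioTargets {
  return {
    droneGain: 0.2 + 0.8 * clamp01(alpha),
    reverbMix: clamp01(theta),
    rhythmDensity: clamp01(beta),
  };
}
```

In a browser, targets like these could be handed to Web Audio with `AudioParam.setTargetAtTime`, which adds its own glide on top of the smoothing.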

I call it NEURO-ARIA. And it’s kind of mesmerizing.

Flow State, Measured

Here’s where it gets interesting.

I was running a NEURO-ARIA session — headband on, eyes open, just vibing with the sound — and I picked up my guitar. I wasn’t thinking about the experiment anymore. I was just playing.

And the sound went quiet.

Not silent. Quiet. The dense, chattering texture that had been filling the room for the first minute of the session just… dissolved. I didn’t notice it happening. I only noticed after.

Then I looked at the session report:

| Window | α | β | γ | State |
|---|---|---|---|---|
| 0:00–0:30 | 0.79 | 0.84 | 0.73 | FOCUSED |
| 0:30–1:00 | 0.50 | 0.68 | 0.63 | FOCUSED |
| 1:00–1:30 | 0.28 | 0.24 | 0.21 | DEEP |
| 1:30–2:00 | 0.44 | 0.48 | 0.43 | FOCUSED |

That 1:00–1:30 window. Every band plummets. Alpha drops from 0.50 to 0.28. Beta from 0.68 to 0.24. Gamma from 0.63 to 0.21. The system classified it as DEEP state.

That’s when I was playing guitar.

What I captured is a well-documented neurological phenomenon called transient hypofrontality — a fancy way of saying your prefrontal cortex shuts up when you’re deep in a practiced skill. When a musician locks into playing, the conscious mind stops micromanaging and motor execution gets handed off to deeper, more automatic brain structures. The frontal lobes go quiet.

The Muse's frontal electrodes (AF7 and AF8) sit on the forehead, directly over the prefrontal cortex. They were perfectly positioned to detect exactly this.

The NEURO-ARIA audio going quiet wasn’t a bug. It was accurately sonifying the fact that my conscious mind went silent while my hands took over.

Flow state, measured and sonified in real time. With a $10 headband from 2016 and a TypeScript app running in Chrome.

I posted about it on LinkedIn. Seemed worth sharing. Here’s the short video demonstration. (It was 60°F in my studio that morning, hence the jacket and beanie in the video. Tennessee winters, man.)

BLINDSIGHT: Painting With Your Eyes Closed

Okay so at this point I’ve got real-time EEG visualization, neurofeedback audio, flow state detection, and session analytics all running in the browser. The natural next question:

What if the brain data drove a paintbrush?

BLINDSIGHT is a brain-painting application. You close your eyes. The system detects the alpha spike on your frontal electrodes (alpha power increases when your eyes close — it’s one of the most reliable signals in EEG). A canvas you can’t see starts painting, driven entirely by your neural activity.
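One way to turn "alpha goes up when the eyes close" into an on/off switch is a threshold with hysteresis, so the canvas doesn't flicker when alpha hovers near the line. This is a sketch under my own assumptions: the function name and the two ratios (1.5 to trigger, 1.2 to release) are made up for illustration, not BLINDSIGHT's actual values.

```typescript
// Sketch only: hypothetical detector with assumed thresholds.

// Returns a function that takes the latest frontal alpha power and reports
// whether the eyes are currently considered closed.
function makeEyesClosedDetector(
  baselineAlpha: number, // eyes-open alpha power from calibration
  closeRatio = 1.5,      // assumed: alpha must reach 1.5x baseline to trigger
  openRatio = 1.2        // assumed: alpha must fall to 1.2x baseline to release
): (alphaPower: number) => boolean {
  let closed = false;
  return (alphaPower: number): boolean => {
    const ratio = alphaPower / baselineAlpha;
    if (!closed && ratio >= closeRatio) {
      closed = true;
    } else if (closed && ratio <= openRatio) {
      closed = false;
    }
    return closed;
  };
}
```

The gap between the two ratios is the whole point: once painting starts, a small dip in alpha won't stop it.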

But here’s the fun part: your eyes still move behind closed lids. When you look left, AF7 deflects relative to AF8. Look right, AF8 picks up the slack. Look up or down, the mean frontal voltage shifts. It’s called electrooculography — your eyeballs produce their own voltage field — and the frontal EEG electrodes are perfectly positioned to catch it. Your eyes are joysticks behind closed lids.

Here’s the full parameter map:

- AF7 − AF8 differential → X velocity (look left → brush drifts left, look right → brush drifts right)

- (AF7 + AF8) mean shift → Y velocity (look down → brush drops, look up → brush rises)

- TP9 band ratio (θ/β) → Color/hue (meditative states produce cool blues, active states produce warm oranges)

- TP10 peak-to-peak → Brush width

- Alpha power → Opacity (deeper relaxation = bolder marks)

- Theta power → Stroke curvature (bezier control point displacement)

- Beta power → Texture/grain (stipple density)

- Jaw clench (TP9 + TP10 RMS spike) → Stamp event (a sharp burst mark on the canvas and a percussive hit in the soundscape)

All parameters mapped relative to a 15-second calibration baseline, not absolute values. Exponential smoothing prevents jitter. Every channel contributes something different to the mark-making.
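To make the map concrete, here is a sketch of a few of those rows as code. The sign conventions are assumptions (I'm treating a positive AF7 − AF8 as "looking left" and a positive frontal mean as "looking up"; a real rig would need to verify this during calibration), and every name, gain, and range below is hypothetical rather than lifted from BLINDSIGHT.

```typescript
// Sketch only: assumed sign conventions, gains, and ranges.

interface Features {
  af7: number;            // µV, baseline-subtracted
  af8: number;            // µV, baseline-subtracted
  thetaBetaRatio: number; // TP9 theta power divided by beta power
  alphaLevel: number;     // 0..1, baseline-relative
}

interface BrushStep {
  dx: number;      // canvas pixels, negative is left
  dy: number;      // canvas pixels, negative is up
  hue: number;     // degrees on the HSL wheel
  opacity: number; // 0..1
}

const clamp01 = (v: number): number => Math.min(1, Math.max(0, v));

function brushStep(f: Features, gain = 0.1): BrushStep {
  const horizontal = f.af7 - f.af8;      // assumed positive when looking left
  const vertical = (f.af7 + f.af8) / 2;  // assumed positive when looking up
  // Assumed range: a ratio of 0.5 is fully "active", 2.0 fully "meditative".
  const t = clamp01((f.thetaBetaRatio - 0.5) / 1.5);
  return {
    dx: -gain * horizontal,
    dy: -gain * vertical,                 // canvas y grows downward
    hue: 30 + t * 190,                    // 30 = warm orange, 220 = cool blue
    opacity: 0.1 + 0.9 * clamp01(f.alphaLevel),
  };
}

// Root-mean-square amplitude of a window of samples.
function rms(values: number[]): number {
  if (values.length === 0) return 0;
  let sum = 0;
  for (const v of values) sum += v * v;
  return Math.sqrt(sum / values.length);
}

// Jaw clench: the ear-electrode RMS jumps well above its calibration baseline.
// The multiplier k = 4 is an assumed threshold.
function isJawClench(tp9: number[], tp10: number[], baselineRms: number, k = 4): boolean {
  return (rms(tp9) + rms(tp10)) / 2 > k * baselineRms;
}
```

Calling `brushStep` once per analysis window and adding `dx`/`dy` to the brush position gives the drifting, velocity-style motion described in the list.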

You open your eyes. The painting is revealed.

You never saw it being made. Your brain painted it blind.

I added bi-axial and quad mirroring options (because symmetry is beautiful and brains are roughly bilateral), and wired up a generative soundscape that blooms when your eyes close — FM drones whose pitch follows the brush as your eyes steer it across the canvas, filter warmth that opens with wider strokes, stereo panning that tracks left-right movement, and a membrane percussion hit every time you clench your jaw. The whole thing breathing in real-time with your neural activity.
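The mirroring itself is just point reflection about the canvas center lines. A minimal sketch, with hypothetical names: the `"vertical-axis"` mode here (two copies, left and right) is only my guess at one symmetric option and may not match what the bi-axial setting actually does, while `"quad"` reflects across both center lines for four copies.

```typescript
// Sketch only: hypothetical mode names; not the BLINDSIGHT implementation.

interface Point {
  x: number;
  y: number;
}

type MirrorMode = "none" | "vertical-axis" | "quad";

// Return every copy of a brush point that should be drawn for a given mode.
function mirrorPoints(p: Point, width: number, height: number, mode: MirrorMode): Point[] {
  const flippedX = width - p.x;  // reflection across the vertical center line
  const flippedY = height - p.y; // reflection across the horizontal center line
  if (mode === "none") return [p];
  if (mode === "vertical-axis") return [p, { x: flippedX, y: p.y }];
  return [
    p,
    { x: flippedX, y: p.y },
    { x: p.x, y: flippedY },
    { x: flippedX, y: flippedY },
  ];
}
```

Each stroke segment gets drawn once per returned point, so quad mode costs four draws per brush step.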

Then I let it run while I went about my afternoon.

Here’s a quick video demo of that!

The dense center of warm reds and oranges corresponds to high-opacity moments where beta was dominant — focused, active thinking pushing the hue warm while deep alpha kept the marks bold. The cooler purples and blues threading through are theta-dominant meditative moments where the mind went quiet and the palette shifted cool. The long sweeping magenta tendrils are where the brush thinned out during deeper relaxation, with wider bezier curvature from elevated theta power.

It looks like something alive. And I never saw it being made.

The Beanie Problem

One discovery from the afternoon sessions: don’t wear a beanie over your headband. I thought the extra constriction would hold the electrodes tighter. It didn’t.

What it actually did was couple the headband to a loose fabric layer, so every micro-movement of my head dragged the electrodes around. It also trapped heat, created variable sweat impedance, and generated static charge buildup. The session report showed Beta and Gamma pegged near 1.00 for almost the entire recording — that’s not peak cognitive performance, that’s sustained broadband noise from bad contact.

The rule: for BLINDSIGHT, skip the beanie. You’re sitting still with eyes closed. The headband stays put on its own.

What’s Next

The signal chain is proven. The tooling is solid. Here’s what’s on deck:

- Controlled sessions: Eyes-closed meditation, no headphones, no audio, clean skin. Get a true alpha-dominant baseline to validate the full pipeline.

- Headphone interference study: Three identical sessions — no audio, over-ears with dreamARIA, IEMs with dreamARIA — to quantify the electromagnetic contamination.

- Timelapse replay & GIF export: Record every stroke frame during a session and replay it as a time-lapse. Export the replay as an animated GIF you can share. Because what’s the point of brain-painting if you can’t show people?

- Hardware upgrade: The Muse 2 (MU-03) adds PPG heart rate sensors and an accelerometer, both supported by muse-js. Heart-rate-to-color mapping. Head-tilt detection. More data, richer paintings.

- Open source: CORTEX and BLINDSIGHT are built to be shared. The Muse MU-02 goes for under $10 on eBay. Anyone with a headband and Chrome can run this.

The original question when I started was: “Can we reappropriate this thing and do cool brainwave observation stuff, or naw?”

Naw was never an option, apparently.